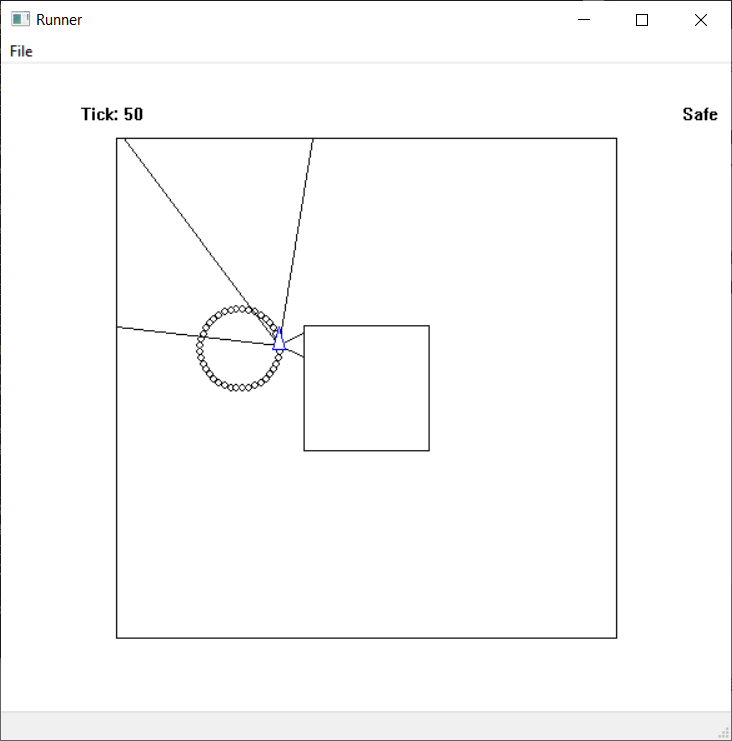

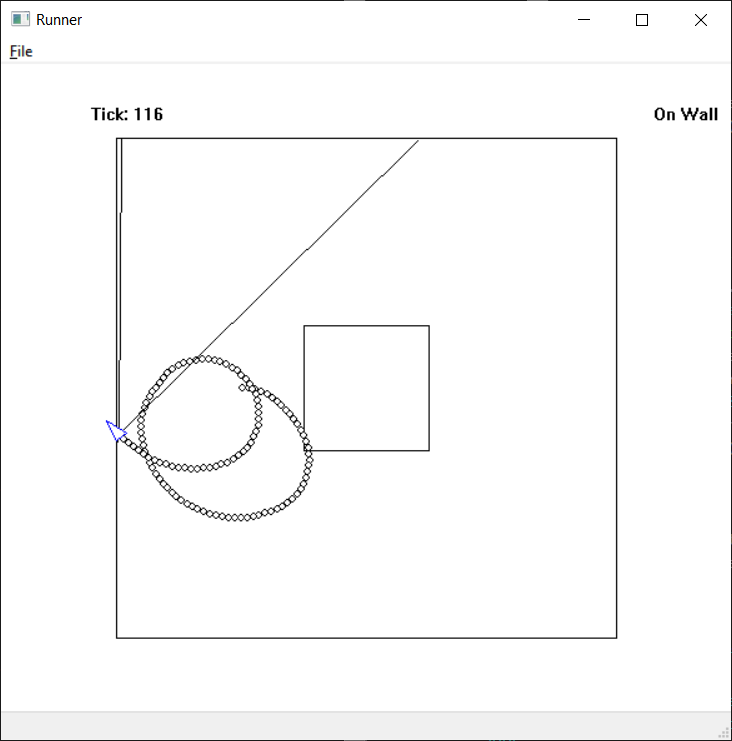

I did some simple work with JSON to get the thinker saved, so I should be able to reconstitute thinkers at will. I'll confirm this works once I have something worth saving. I have a Python object that can read the five inputs from the map, run them through the matrices, and spit out the angle it needs to turn. I even added little tracing circles to show the path of the thing as it “navigates” the maze:
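For reference, here is a minimal sketch of that kind of JSON round trip. The `Thinker` class below is a hypothetical stand-in, not the real one: it assumes numpy arrays in memory, keeps only the fields that appear in the saved file later in this post (`input_count`, `layers`, `output_count`, `synapses`, `weights`, and the `jsoncls` tag), and leaves out the range settings for brevity. The main wrinkle is that numpy arrays aren't JSON-serializable, so they go out as nested lists.

```python
import json

import numpy as np


class Thinker:
    """Hypothetical stand-in holding only what the JSON round trip needs."""

    def __init__(self, input_count, layers, output_count, synapses, weights):
        self.input_count = input_count
        self.layers = list(layers)
        self.output_count = output_count
        # One matrix (or column) per layer transition, kept as numpy arrays.
        self.synapses = [np.asarray(s, dtype=float) for s in synapses]
        self.weights = [np.asarray(w, dtype=float) for w in weights]

    def to_json(self):
        # Convert arrays to nested lists; tag the payload with the class name.
        return json.dumps({
            "input_count": self.input_count,
            "layers": self.layers,
            "output_count": self.output_count,
            "synapses": [s.tolist() for s in self.synapses],
            "weights": [w.tolist() for w in self.weights],
            "jsoncls": type(self).__name__,
        })

    @classmethod
    def from_json(cls, text):
        data = json.loads(text)
        # Refuse payloads that were saved from some other class.
        if data.get("jsoncls") != cls.__name__:
            raise ValueError(f"not a {cls.__name__}: {data.get('jsoncls')!r}")
        return cls(data["input_count"], data["layers"], data["output_count"],
                   data["synapses"], data["weights"])


original = Thinker(2, [1], 1, [[[0.5, -0.5]], [[1.0]]], [[[0.1]], [[0.0]]])
copy = Thinker.from_json(original.to_json())
print(copy.layers, copy.synapses[0])
```

Python's `json` writes floats with `repr`, so the values come back bit-for-bit identical.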

Most of the time the Thinker spits out a 1, but sometimes it spits out other numbers, and they aren't different enough to make much of a difference. A few tests had the runner spiraling very slowly, but it was still essentially running in circles. For all of these tests I'm having the thinker object randomize its synapses and biases.

Obviously, these random numbers aren’t enough to make the damn thing do anything interesting.

The first map I have is 400 pixels by 400 pixels, so the distances could be anything from 0 to 400 (or up to about 566, which is 400√2, along a diagonal). I need to examine the weights of these randomized thinkers to see if I can find a pattern. While messing with the outputs to format them nicely (I don't think I need 20 decimal places to analyze the data), I came across this guy:

Scanning the outputs, this guy only ever produced two results: somewhere around 0.13 (rarely 0.122) or around 0.29. The end result is squished between 0 and 1, remember, and then I multiply by 2, subtract 1, and multiply by tau/40, so the turn is never more than tau/40 (9°) in either direction. Here's the JSON output of the randomized thinker:
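The output scaling just described, written out as a small sketch. The function name `turn_angle` is hypothetical, and this assumes the squished value arrives as a plain float in [0, 1]:

```python
import math

# Largest turn in either direction: tau/40 radians, which is 9 degrees.
MAX_TURN = math.tau / 40


def turn_angle(squished: float) -> float:
    """Map a sigmoid output in [0, 1] to a turn in [-tau/40, tau/40] radians."""
    return (2 * squished - 1) * MAX_TURN


for s in (0.0, 0.5, 1.0):
    angle = turn_angle(s)
    print(f"{s:.1f} -> {angle:+.4f} rad ({math.degrees(angle):+.1f} deg)")
```

Since 2σ(x) - 1 = tanh(x/2), the same mapping could also be written as `math.tanh(x / 2) * MAX_TURN` on the raw pre-activation `x`.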

```json
{"input_count": 5,
 "layers": [3, 4],
 "output_count": 1,
 "synapses": [
   [[-0.4914, -0.4220, 0.8770, 0.7782, 0.0148],
    [ 0.3659, -0.8196, 0.0923, 0.3097, -0.1648],
    [-0.8482, 0.1504, 0.8237, 0.1866, 0.8727]],
   [[-0.8820, 0.8718, 0.2187],
    [-0.5129, -0.3895, -0.8474],
    [-0.7380, -0.5483, 0.5057],
    [ 0.2030, -0.8571, -0.8560]],
   [[0.7712, 0.5206, -0.8747, 0.9804]]],
 "weights": [
   [[-115.6903], [-171.2310], [-42.1870]],
   [[-0.3743], [0.2523], [-0.1249], [-0.5520]],
   [[0.0490]]],
 "weight_range": [-200, 0],
 "synapse_range": [-1, 1],
 "jsoncls": "Thinker"}
```
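The shapes in that file follow from `input_count`, `layers`, and `output_count`: each synapse matrix has one row per neuron in the next layer and one column per value feeding into it, and each `weights` entry is a single column with one value per neuron. A small hypothetical helper (not part of the actual Thinker) that derives those shapes:

```python
def expected_shapes(input_count, layers, output_count):
    """Hypothetical helper: (rows, cols) of each synapse matrix and weights column."""
    sizes = [input_count, *layers, output_count]
    # Each matrix maps a layer of n_in values onto the next layer's n_out neurons.
    synapse_shapes = [(n_out, n_in) for n_in, n_out in zip(sizes, sizes[1:])]
    # One added value per neuron, stored as a column.
    weight_shapes = [(n_out, 1) for n_out in sizes[1:]]
    return synapse_shapes, weight_shapes


print(expected_shapes(5, [3, 4], 1))
```

For this thinker that prints `([(3, 5), (4, 3), (1, 4)], [(3, 1), (4, 1), (1, 1)])`, which matches the saved matrices.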

The weight_range (which covers the "weights" added after each matrix multiplication, i.e. the biases) only applies to the first layer. My reasoning was that once the inputs are weighted into the first hidden layer, the values get squished to between 0 and 1, so massive weights after that point probably wouldn't be useful. I have yet to find a resource that explains how to come up with sensible values here.
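One way to read that randomization scheme as code, as a hypothetical sketch assuming numpy and uniform sampling: every synapse matrix is drawn from synapse_range, the first layer's weights come from weight_range, and (an assumption, though it is consistent with the saved values above) the later weights come from synapse_range too. The name `randomize` is made up for illustration.

```python
import numpy as np


def randomize(input_count, layers, output_count,
              weight_range=(-200.0, 0.0), synapse_range=(-1.0, 1.0), rng=None):
    """Hypothetical sketch: weight_range is used for the first layer's weights only."""
    rng = np.random.default_rng() if rng is None else rng
    sizes = [input_count, *layers, output_count]
    synapses, weights = [], []
    for i, (n_in, n_out) in enumerate(zip(sizes, sizes[1:])):
        # Every synapse matrix is drawn uniformly from synapse_range.
        synapses.append(rng.uniform(synapse_range[0], synapse_range[1],
                                    size=(n_out, n_in)))
        # First layer uses weight_range; later layers use synapse_range (assumed).
        low, high = weight_range if i == 0 else synapse_range
        weights.append(rng.uniform(low, high, size=(n_out, 1)))
    return synapses, weights


synapses, weights = randomize(5, [3, 4], 1, rng=np.random.default_rng(0))
print([s.shape for s in synapses], weights[0].ravel())
```

Passing a seeded `np.random.default_rng(...)` makes a particular random thinker reproducible, which helps when a run like this one is worth studying again.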

I also printed out the first steps of the calculations, which may explain what's going on here.

After doing the matrix multiplication of the first synapses and the inputs, adding the weights, and squishing, I get:

```
[[2.17313e-62] [2.82042e-71] [1.00000e+00]]
```

So the first hidden layer comes out as basically [0, 0, 1], which seems to flatten everything downstream.
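The saturation is easy to reproduce by hand. This sketch assumes numpy and the layout described in the text (rows of the synapse matrix are first-layer neurons, and the weights are added after the matrix product). It copies the four-decimal values from the saved JSON and uses the first step's distances (200, 70.71, 50, 70.71, 200), so the tiny numbers only match the printout approximately.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


# First-layer synapses and weights from the saved thinker (rounded to 4 decimals).
S1 = np.array([[-0.4914, -0.4220, 0.8770, 0.7782, 0.0148],
               [0.3659, -0.8196, 0.0923, 0.3097, -0.1648],
               [-0.8482, 0.1504, 0.8237, 0.1866, 0.8727]])
W1 = np.array([[-115.6903], [-171.2310], [-42.1870]])

# First step's distances as a column vector of raw pixel values.
distances = np.array([[200.0], [70.71], [50.0], [70.71], [200.0]])

z1 = S1 @ distances + W1   # pre-activations, roughly -142, -162 and +28
h1 = sigmoid(z1)           # roughly 2e-62, 3e-71 and 1.0
print(z1.ravel())
print(h1.ravel())
```

For comparison, σ(±5) is already within about 0.007 of 0 or 1, so pre-activations in the hundreds leave each first-layer neuron acting like an on/off switch.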

The inputs (distances) and final output for the first two steps are:

```
['200.00', '70.71', '50.00', '70.71', '200.00'] 0.1306522164636137
['200.77', '254.29', '45.34', '57.42', '201.94'] 0.13065221646348157
```

The distances are different, but the results agree to twelve decimal places. Reviewing the rest of the intermediate calculations, the first round of $\sigma(m \cdot i + w)$ always returns [0, 0, 1], or something so close to it that the math never recovers; some of the values in this list are down around e-106. When the first round ends in [0, 0, 0], I get a different result (close to 0.29).
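The whole three-layer pass can be sketched to check both regimes. This assumes numpy, copies the four-decimal values from the saved JSON (so matches are approximate), and rests on one inference from the arithmetic rather than anything stated in the post: the reported 0.13 and 0.29 only come out of these weights if the printed number is taken after the `2 * x - 1` step, so `tail` returns that value. `tail` and `forward` are hypothetical names.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


# Values copied from the saved thinker (four decimals, so matches are approximate).
SYNAPSES = [
    np.array([[-0.4914, -0.4220, 0.8770, 0.7782, 0.0148],
              [0.3659, -0.8196, 0.0923, 0.3097, -0.1648],
              [-0.8482, 0.1504, 0.8237, 0.1866, 0.8727]]),
    np.array([[-0.8820, 0.8718, 0.2187],
              [-0.5129, -0.3895, -0.8474],
              [-0.7380, -0.5483, 0.5057],
              [0.2030, -0.8571, -0.8560]]),
    np.array([[0.7712, 0.5206, -0.8747, 0.9804]]),
]
WEIGHTS = [
    np.array([[-115.6903], [-171.2310], [-42.1870]]),
    np.array([[-0.3743], [0.2523], [-0.1249], [-0.5520]]),
    np.array([[0.0490]]),
]


def tail(h1):
    """Run layers 2 and 3 from a first-hidden-layer activation; return 2*sigma - 1."""
    h2 = sigmoid(SYNAPSES[1] @ h1 + WEIGHTS[1])
    out = sigmoid(SYNAPSES[2] @ h2 + WEIGHTS[2])
    return float(2 * out[0, 0] - 1)


def forward(distances):
    """Full pass from five raw distances."""
    x = np.asarray(distances, dtype=float).reshape(-1, 1)
    h1 = sigmoid(SYNAPSES[0] @ x + WEIGHTS[0])
    return tail(h1)


print(forward([200.00, 70.71, 50.00, 70.71, 200.00]))   # first layer ~[0, 0, 1]
print(forward([200.77, 254.29, 45.34, 57.42, 201.94]))  # same first-layer pattern
print(tail(np.zeros((3, 1))))                            # the [0, 0, 0] case
```

The first two lines come out essentially identical (near 0.1306) and the last comes out near 0.293, which lines up with the two values this thinker kept producing.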

That’s the source of the problem, I think. Now I can play around with the weight_range options and see if I get some real variation in actions.